Stanford Machine Learning: Linear Regression and Logistic Regression (3)

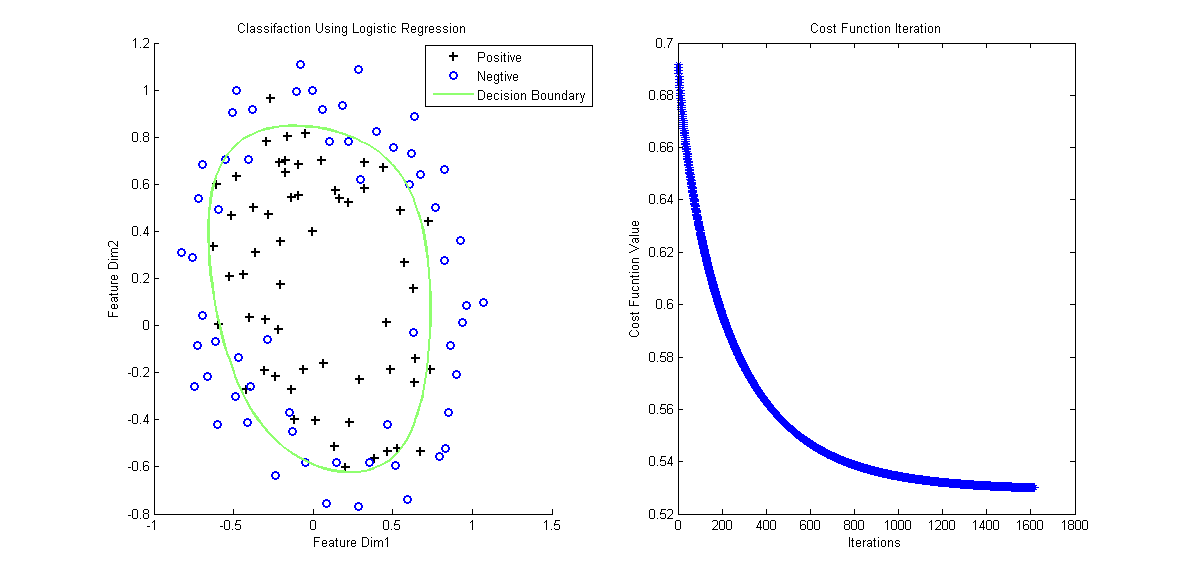

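电脑杂谈  Published: 2016-04-22 09:03:43  Source: compiled from the web

Finally, one more example (sample data link). The corresponding MATLAB classification code, with a regularization term and feature mapping, is as follows: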

```matlab
function LogisticEx2
% Regularized logistic regression with polynomial feature mapping
close all; clc
data = load('ex2data2.txt');   % columns: feature 1, feature 2, label (0/1)
x = data(:,1:2);
y = data(:,3);

% Plot original data
figure
positive = find(y==1);
negative = find(y==0);
subplot(1,2,1);
hold on
plot(x(positive,1), x(positive,2), 'k+', 'LineWidth', 2, 'MarkerSize', 7);
plot(x(negative,1), x(negative,2), 'bo', 'LineWidth', 2, 'MarkerSize', 7);

% Compute likelihood (cost) function
x = mapFeature(x);                 % m x 28 design matrix of degree-6 polynomial terms
theta = zeros(size(x,2), 1);
lambda = 1;                        % regularization strength
[cost, grad] = cost_func(theta, x, y, lambda);
threshold = 0.53;                  % stop once the cost drops below this value
alpha = 10^(-1);                   % learning rate
maxIter = 100000;                  % added safeguard (arbitrary cap) in case threshold is never reached
costs = [];
iter = 0;
while cost > threshold && iter < maxIter
    % grad holds the NEGATIVE gradient of J, so adding it descends the cost
    theta = theta + alpha * grad;
    [cost, grad] = cost_func(theta, x, y, lambda);
    costs = [costs cost];
    iter = iter + 1;
end

% Plot decision boundary
hold on
plotDecisionBoundary(theta, x, y);
legend('Positive', 'Negative', 'Decision Boundary')
xlabel('Feature Dim1');
ylabel('Feature Dim2');
title('Classification Using Logistic Regression');

% Plot cost per iteration
% figure,
subplot(1,2,2); plot(costs, '*');
title('Cost Function Iteration');
xlabel('Iterations');
ylabel('Cost Function Value');
end

function f = mapFeature(x)
% Map the two input features to all polynomial terms up to the given degree:
% 1, x1, x2, x1^2, x1*x2, x2^2, ..., x2^6 (28 columns for degree 6)
degree = 6;
f = ones(size(x(:,1)));
for i = 1:degree
    for j = 0:i
        f(:, end+1) = (x(:,1).^(i-j)).*(x(:,2).^j);
    end
end
end

function g = sigmoid(z)
g = 1.0 ./ (1.0 + exp(-z));
end

function [J, grad] = cost_func(theta, X, y, lambda)
% Compute the regularized cost J and the NEGATIVE gradient of J
m = length(y);                         % number of training examples
hx = sigmoid(X*theta);
J = (1./m)*sum(-y.*log(hx)-(1.0-y).*log(1.0-hx)) + (lambda./(2*m)*norm(theta(2:end))^2);
regularize = (lambda/m).*theta;
regularize(1) = 0;                     % the bias term theta(1) is not regularized
grad = (1./m) .* X' * (y-hx) - regularize;
end

function plotDecisionBoundary(theta, X, y)
hold on
if size(X, 2) <= 3
    % Only need 2 points to define a line, so choose two endpoints
    plot_x = [min(X(:,2))-2, max(X(:,2))+2];
    % Calculate the decision boundary line
    plot_y = (-1./theta(3)).*(theta(2).*plot_x + theta(1));
    % Plot, and adjust axes for better viewing
    plot(plot_x, plot_y)
    % Legend, specific for the exercise
    legend('Admitted', 'Not admitted', 'Decision Boundary')
    axis([30, 100, 30, 100])
else
    % Here is the grid range
    u = linspace(-1, 1.5, 50);
    v = linspace(-1, 1.5, 50);
    z = zeros(length(u), length(v));
    % Evaluate z = theta'*x over the grid
    for i = 1:length(u)
        for j = 1:length(v)
            z(i,j) = mapFeature([u(i), v(j)])*theta;
        end
    end
    z = z';   % transpose so rows follow v and columns follow u, as contour expects
    % Draw the zero level set, i.e. where theta'*x = 0 (h = 0.5)
    contour(u, v, z, [0, 0], 'LineWidth', 2)
end
end
```
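For reference, here is what `cost_func` computes, written out as formulas. Here $h_\theta(x) = 1/(1+e^{-\theta^T x})$ is the sigmoid hypothesis, $m$ is the number of training examples, $x_0 = 1$ is the bias column, and $\theta_0$ corresponds to `theta(1)` in MATLAB's 1-based indexing; it is left out of the penalty, matching `theta(2:end)` and `regularize(1) = 0` in the code. With `degree = 6`, `mapFeature` produces every monomial $x_1^{i-j}x_2^{j}$ with $0 \le j \le i \le 6$, i.e. $\sum_{i=0}^{6}(i+1) = 28$ columns, so $n = 27$ below.

$$
J(\theta) = \frac{1}{m}\sum_{i=1}^{m}\Big[-y^{(i)}\log h_\theta(x^{(i)}) - \big(1-y^{(i)}\big)\log\big(1-h_\theta(x^{(i)})\big)\Big] + \frac{\lambda}{2m}\sum_{j=1}^{n}\theta_j^2
$$

$$
\frac{\partial J}{\partial \theta_0} = \frac{1}{m}\sum_{i=1}^{m}\big(h_\theta(x^{(i)}) - y^{(i)}\big)x_0^{(i)},
\qquad
\frac{\partial J}{\partial \theta_j} = \frac{1}{m}\sum_{i=1}^{m}\big(h_\theta(x^{(i)}) - y^{(i)}\big)x_j^{(i)} + \frac{\lambda}{m}\theta_j \quad (j \ge 1)
$$

$$
\theta_j := \theta_j - \alpha\frac{\partial J}{\partial \theta_j} = \theta_j + \alpha\Big[\frac{1}{m}\sum_{i=1}^{m}\big(y^{(i)} - h_\theta(x^{(i)})\big)x_j^{(i)} - \frac{\lambda}{m}\theta_j\Big] \quad (j \ge 1)
$$

For $j = 0$ the $\frac{\lambda}{m}\theta_j$ term is absent. The bracketed vector is exactly the `grad` returned by `cost_func`, i.e. the negative gradient of $J$, which is why the loop writes `theta = theta + alpha * grad` and still performs gradient descent on $J$.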

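Looking back at logistic regression once more: for this nonlinear (0/1 classification) problem, all it does is pass a linear function $\theta^T x$ through a nonlinear mapping, the sigmoid function, so the problem can still be handled with a linear model. So besides the sigmoid, are there other functions that could be used? Andrew Ng also covers the exponential family of distributions, which answers this question.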
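As a brief sketch of how the exponential family answers this (following the standard derivation in Andrew Ng's CS229 notes; the symbols $\eta$, $T$, $a$, $b$ are that framework's notation and are not defined elsewhere in this article): a distribution belongs to the exponential family if it can be written as $p(y;\eta) = b(y)\exp\big(\eta^T T(y) - a(\eta)\big)$. Writing the Bernoulli distribution with mean $\phi$ in this form gives

$$
p(y;\phi) = \phi^{y}(1-\phi)^{1-y} = \exp\Big(y\log\frac{\phi}{1-\phi} + \log(1-\phi)\Big)
$$

so $b(y) = 1$, $T(y) = y$, $\eta = \log\frac{\phi}{1-\phi}$ and $a(\eta) = -\log(1-\phi) = \log(1+e^{\eta})$. Inverting the expression for $\eta$:

$$
\phi = \frac{1}{1+e^{-\eta}}
$$

Assuming $\eta = \theta^T x$ (the generalized linear model assumption), the hypothesis $h_\theta(x) = E[y \mid x;\theta] = \phi = 1/(1+e^{-\theta^T x})$ is exactly the sigmoid. In this view the sigmoid is a consequence of modeling $y$ as Bernoulli; choosing a different member of the family gives a different response function, for example a Gaussian $y$ gives the identity (ordinary linear regression) and a multinomial $y$ gives softmax.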

This article is from 电脑杂谈; if you repost it, please cite its URL:

http://www.pc-fly.com/a/shenmilingyu/article-2489-3.html