Where Are Linux Log Files: Summary of Common Big Data Cluster Problems (4)

电脑杂谈 · Published: 2018-02-20 23:29:49 · Source: compiled from the web

at org.apache.hadoop.dfs.DFSClient$DFSOutputStream.processDatanodeError(DFSClient.java:2158)

at org.apache.hadoop.dfs.DFSClient$DFSOutputStream.access$1400(DFSClient.java:1735)

at org.apache.hadoop.dfs.DFSClient$DFSOutputStream$DataStreamer.run(DFSClient.java:1889)

java.io.IOException: Could not get block locations. Aborting…

at org.apache.hadoop.dfs.DFSClient$DFSOutputStream.processDatanodeError(DFSClient.java:2143)

at org.apache.hadoop.dfs.DFSClient$DFSOutputStream.access$1400(DFSClient.java:1735)

at org.apache.hadoop.dfs.DFSClient$DFSOutputStream$DataStreamer.run(DFSClient.java:1889)

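Cause: the Linux machine has too many files open. Solution: running ulimit -n shows that the default open-file limit on Linux is 1024. Edit /etc/security/limits.conf and add a line such as hadoop soft nofile 65535, then rerun the program from a new login session (preferably apply the change on every datanode). This resolves the problem.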
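The limit check described above can also be done from Python before starting a job. The following is a minimal sketch, not part of the original write-up: the names `nofile_limits`, `is_limit_sufficient`, and `RECOMMENDED_NOFILE` are illustrative, and the threshold simply reuses the 65535 value quoted in the text.

```python
import resource

# Target value taken from the text above; adjust it for your own cluster.
RECOMMENDED_NOFILE = 65535


def nofile_limits():
    """Return the (soft, hard) open-file limits of the current process."""
    return resource.getrlimit(resource.RLIMIT_NOFILE)


def is_limit_sufficient(soft, recommended=RECOMMENDED_NOFILE):
    """Return True when the soft limit is unlimited or at least `recommended`."""
    return soft == resource.RLIM_INFINITY or soft >= recommended


if __name__ == "__main__":
    soft, hard = nofile_limits()
    print(f"open-file limits: soft={soft} hard={hard}")
    if not is_limit_sufficient(soft):
        print("soft limit is below the recommended value; check /etc/security/limits.conf")
```

Run it as the same user that starts the Hadoop daemons, since the limits configured in limits.conf apply per user and per login session.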

12. Running bin/hadoop jps throws the following exception:

Exception in thread "main" java.lang.NullPointerException

at sun.jvmstat.perfdata.monitor.protocol.local.LocalVmManager.activeVms(LocalVmManager.java:127)

at sun.jvmstat.perfdata.monitor.protocol.local.MonitoredHostProvider.activeVms(MonitoredHostProvider.java:133)

at sun.tools.jps.Jps.main(Jps.java:45)

Cause: the /tmp directory under the system root had been deleted. Solution: recreate the /tmp directory.
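Recreating the directory can be sketched as follows. This is an illustration under stated assumptions rather than the author's procedure: the helper name `ensure_tmp_dir` is hypothetical, and the mode 1777 (world-writable with the sticky bit) is the conventional setting for /tmp on Linux, which the original text does not mention.

```python
import os
import stat


def ensure_tmp_dir(path="/tmp"):
    """Create `path` if it is missing and give it mode 1777.

    Returns True if the directory had to be created, False if it already existed.
    """
    created = False
    if not os.path.isdir(path):
        os.makedirs(path)
        created = True
    # Set the mode explicitly, because makedirs applies the process umask.
    # Changing the mode of an existing directory requires owning it or being root.
    if stat.S_IMODE(os.stat(path).st_mode) != 0o1777:
        os.chmod(path, 0o1777)
    return created
```

For the real /tmp the call would be `ensure_tmp_dir()` run as root; the `path` parameter exists only so the helper can be tried on a scratch location first.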

13. bin/hive reports: unable to create log directory /tmp/

Cause: the /tmp directory under the system root had been deleted. Solution: recreate the /tmp directory.

14. MySQL error

[root@localhost mysql]# ./bin/mysqladmin -u root password '123456'

./bin/mysqladmin: connect to server at 'localhost' failed

error: 'Can't connect to local MySQL server through socket '/tmp/mysql.sock' (2)'

Check that mysqld is running and that the socket: '/tmp/mysql.sock' exists!
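The message asks whether the socket file exists; the trailing (2) is the operating system's errno 2, "No such file or directory". A minimal Python sketch of that check follows. The function name `socket_file_status` is hypothetical, and the default path is the one printed in the error above.

```python
import os
import stat


def socket_file_status(path="/tmp/mysql.sock"):
    """Classify `path` as 'missing', 'not-a-socket', or 'socket'."""
    try:
        mode = os.stat(path).st_mode
    except FileNotFoundError:
        return "missing"
    return "socket" if stat.S_ISSOCK(mode) else "not-a-socket"
```

A result of "missing" matches the error shown here and suggests that mysqld is not running or is creating its socket at a different path.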

This article comes from 电脑杂谈; when reposting, please cite this article's URL:

http://www.pc-fly.com/a/ruanjian/article-86344-4.html

-

-

孙同一

孙同一脸皮可真厚

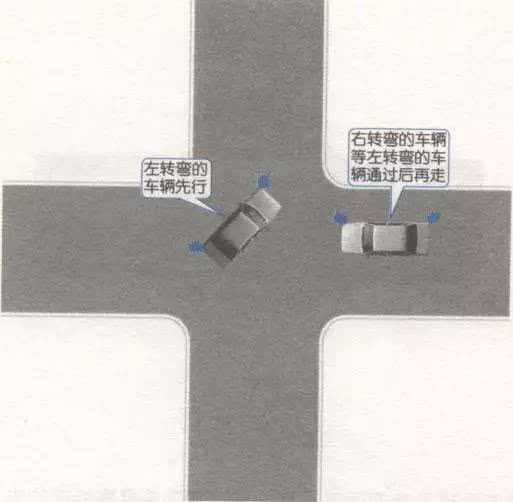

在回旋处行驶. 当其他车辆被迫进入时,只要它们具有优先权,就可以避免进入.

在回旋处行驶. 当其他车辆被迫进入时,只要它们具有优先权,就可以避免进入. 武警忠诚卫士心得体会6篇

武警忠诚卫士心得体会6篇 增加知识|如何拯救自己?不会游泳的人掉入水中后可以自救

增加知识|如何拯救自己?不会游泳的人掉入水中后可以自救 荒村听雨?荒村踽?荒村红杏?正文 第八十九章:夜寒苏(上)

荒村听雨?荒村踽?荒村红杏?正文 第八十九章:夜寒苏(上)

所谓“日本潜艇强于中国”的说法完全是无稽之谈