Summary of Common Problems in Big Data Clusters (2)

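电脑杂谈 (pc-fly.com) | Published: 2018-02-20 23:29:49 | Source: compiled from online sources

…connection timeout. Solution: in hadoop-site.xml, set dfs.datanode.socket.write.timeout=0 (a value of 0 disables the DataNode socket write timeout).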
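The setting above is an ordinary `<property>` entry inside the `<configuration>` element of hadoop-site.xml. As a minimal sketch using only Python's standard `xml.etree.ElementTree`, the entry can be generated like this; the helper name `make_property` is hypothetical and not part of any Hadoop tool:

```python
import xml.etree.ElementTree as ET


def make_property(name: str, value: str) -> str:
    """Build a Hadoop-style <property> entry and return it as an XML string."""
    prop = ET.Element("property")
    ET.SubElement(prop, "name").text = name
    ET.SubElement(prop, "value").text = value
    # encoding="unicode" returns a str without an XML declaration
    return ET.tostring(prop, encoding="unicode")


if __name__ == "__main__":
    # 0 disables the DataNode socket write timeout, per the fix above
    print(make_property("dfs.datanode.socket.write.timeout", "0"))
```

This prints `<property><name>dfs.datanode.socket.write.timeout</name><value>0</value></property>`, which can be pasted inside `<configuration>` (indent by hand if desired).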

4. Fixing the Hadoop OutOfMemoryError problem:

```xml
<property>
  <name>mapred.child.java.opts</name>
  <value>-Xmx800M -server</value>
</property>
```

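Or set it on the command line: hadoop jar jarfile [main class] -D mapred.child.java.opts=-Xmx800M (the -D option is only picked up if the main class parses Hadoop's generic options, for example by running through ToolRunner).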
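When tuning this value it can help to check how much heap an options string actually requests. A small sketch that extracts the `-Xmx` size in MiB from a value such as `-Xmx800M -server`; the function name `max_heap_mib` is hypothetical, and it only understands the `k`/`m`/`g` suffixes and bare byte counts:

```python
import re

# Multipliers that convert a JVM size suffix to MiB
_TO_MIB = {"k": 1 / 1024, "m": 1, "g": 1024}


def max_heap_mib(java_opts: str):
    """Return the -Xmx size in MiB from a JVM options string, or None if absent.

    If -Xmx is given more than once, the last occurrence is used, as HotSpot does.
    """
    matches = re.findall(r"-Xmx(\d+)([kKmMgG]?)", java_opts)
    if not matches:
        return None
    number, suffix = matches[-1]
    if not suffix:  # no suffix means the size is given in bytes
        return int(number) / (1024 * 1024)
    return int(number) * _TO_MIB[suffix.lower()]


if __name__ == "__main__":
    print(max_heap_mib("-Xmx800M -server"))  # value from the config above; prints 800
```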

5. Hadoop java.io.IOException: Job failed! at org.apache.hadoop.mapred.JobClient.runJob(JobClient.java:1232) while indexing

When I use Nutch 1.0, I get this error:

Hadoop java.io.IOException: Job failed! at org.apache.hadoop.mapred.JobClient.runJob(JobClient.java:1232) while indexing.

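Solution: delete conf/log4j.properties, after which the detailed error report becomes visible. If the error turns out to be out of memory, add the JVM arguments -Xms64m -Xmx512m when running the main class org.apache.nutch.crawl.Crawl.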
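The decision described above (add the heap flags once the detailed log shows an out-of-memory error) can be sketched as a small command builder. This is an illustration under assumptions: the function name, classpath and crawl arguments are hypothetical placeholders, and in practice Nutch is normally launched through its `bin/nutch` script rather than a hand-built `java` command:

```python
import shlex

# Heap settings taken from the fix described above
HEAP_FLAGS = ["-Xms64m", "-Xmx512m"]
CRAWL_CLASS = "org.apache.nutch.crawl.Crawl"


def crawl_command(classpath, crawl_args, log_text=""):
    """Build a java argv for the Nutch Crawl class.

    The heap flags are added only when the detailed log mentions OutOfMemoryError.
    """
    cmd = ["java"]
    if "OutOfMemoryError" in log_text:
        cmd += HEAP_FLAGS
    cmd += ["-cp", classpath, CRAWL_CLASS, *crawl_args]
    return cmd


if __name__ == "__main__":
    # Hypothetical classpath and crawl arguments, for illustration only
    log = "java.lang.OutOfMemoryError: Java heap space"
    argv = crawl_command("conf:nutch-1.0.jar:lib/*", ["urls", "-dir", "crawl"], log)
    print(shlex.join(argv))
```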

6. NameNode in safe mode

Solution: run the command bin/hadoop dfsadmin -safemode leave
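Since the NameNode normally leaves safe mode by itself once enough blocks have been reported, it can be worth checking the state before forcing it. A minimal sketch that wraps `dfsadmin -safemode get` and only issues `leave` when needed; it assumes the status output contains the text `Safe mode is ON`, and the function names and default `bin/hadoop` path are illustrative:

```python
import subprocess


def safemode_is_on(dfsadmin_output: str) -> bool:
    """Return True if `dfsadmin -safemode get` output reports safe mode ON."""
    return "Safe mode is ON" in dfsadmin_output


def leave_safemode_if_on(hadoop: str = "bin/hadoop") -> bool:
    """Query safe mode and leave it only if it is ON; return True if we left it."""
    status = subprocess.run(
        [hadoop, "dfsadmin", "-safemode", "get"],
        capture_output=True, text=True, check=True,
    ).stdout
    if safemode_is_on(status):
        subprocess.run([hadoop, "dfsadmin", "-safemode", "leave"], check=True)
        return True
    return False
```

Run `leave_safemode_if_on()` from the Hadoop installation directory, or pass the full path to the `hadoop` script.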

7. java.net.NoRouteToHostException: No route to host

Solution: stop the firewall with sudo /etc/init.d/iptables stop
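Before and after stopping the firewall, it helps to confirm whether a node's port is reachable at all. A minimal sketch using Python's `socket.create_connection`; the function name is hypothetical, and the host and port in the usage line are placeholders to be replaced with the NameNode or DataNode address from your own configuration:

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers "No route to host", "Connection refused", DNS errors and timeouts
        return False


if __name__ == "__main__":
    # Hypothetical address; substitute a real node from your cluster
    print(can_connect("namenode-host", 9000))
```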

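8. After the NameNode is changed, SELECT queries in Hive still point to the old NameNode address

Solution: in the metastore, replace every occurrence of the old NameNode address with the current NameNode address.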
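A sketch of that replacement, run here against an in-memory SQLite database so it is self-contained. It assumes the metastore keeps locations in `DBS.DB_LOCATION_URI` and `SDS.LOCATION` (the column names used by typical Hive metastore schemas; verify against your own metastore, which is usually MySQL or Derby rather than SQLite). The function name and NameNode URIs are hypothetical. Back up the metastore before running an equivalent UPDATE against it:

```python
import sqlite3

# Assumed (table, column) pairs that hold HDFS locations in the metastore
LOCATION_COLUMNS = (("DBS", "DB_LOCATION_URI"), ("SDS", "LOCATION"))


def replace_namenode(conn, old_uri, new_uri):
    """Replace every occurrence of old_uri with new_uri; return rows changed."""
    changed = 0
    for table, column in LOCATION_COLUMNS:
        # Table/column names come from the fixed tuple above, not user input
        cur = conn.execute(
            f"UPDATE {table} SET {column} = REPLACE({column}, ?, ?) "
            f"WHERE INSTR({column}, ?) > 0",
            (old_uri, new_uri, old_uri),
        )
        changed += cur.rowcount
    conn.commit()
    return changed


if __name__ == "__main__":
    # In-memory stand-in for the metastore, with hypothetical NameNode URIs
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE DBS (DB_LOCATION_URI TEXT)")
    conn.execute("CREATE TABLE SDS (LOCATION TEXT)")
    conn.execute("INSERT INTO DBS VALUES ('hdfs://old-nn:9000/user/hive/warehouse')")
    conn.execute("INSERT INTO SDS VALUES ('hdfs://old-nn:9000/user/hive/warehouse/t1')")
    print(replace_namenode(conn, "hdfs://old-nn:9000", "hdfs://new-nn:9000"))
    print(conn.execute("SELECT LOCATION FROM SDS").fetchone()[0])
```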

9.ERROR metadata.Hive (Hive.java:getPartitions(499)) - javax.jdo.JDODataStoreException: Required table missing : ""PARTITIONS"" in Catalog "" Schema "". JPOX requires this table to perform its persistence operations. Either your MetaData is incorrect, or you need to enable "org.jpox.autoCreateTables"

Cause: org.jpox.fixedDatastore was set to true in hive-default.xml; it should be set to false.
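The value can also be flipped programmatically. A minimal sketch with `xml.etree.ElementTree`; the function name `set_property` is hypothetical, and because ElementTree does not preserve XML comments or the XML declaration on this round-trip, keep a copy of the original file:

```python
import xml.etree.ElementTree as ET


def set_property(config_xml: str, name: str, value: str) -> str:
    """Set the value of a named <property>, appending the property if absent.

    Note: ElementTree drops XML comments and the XML declaration on this
    round-trip, so keep a backup of the original file.
    """
    root = ET.fromstring(config_xml)
    for prop in root.findall("property"):
        if prop.findtext("name", "").strip() == name:
            val = prop.find("value")
            if val is None:
                val = ET.SubElement(prop, "value")
            val.text = value
            break
    else:  # no matching property found: append a new one
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    sample = (
        "<configuration><property><name>org.jpox.fixedDatastore</name>"
        "<value>true</value></property></configuration>"
    )
    print(set_property(sample, "org.jpox.fixedDatastore", "false"))
```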

10. INFO hdfs.DFSClient: Exception in createBlockOutputStream java.io.IOException: Bad connect ack with firstBadLink 192.168.1.11:50010

> INFO hdfs.DFSClient: Abandoning block blk_-8575812198227241296_1001
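To see which DataNode address is reported as the first bad link, and how often, here is a sketch that tallies `firstBadLink` addresses in DFSClient log lines. The function name is hypothetical, and the regular expression is written against the message above, so other log formats may need adjusting:

```python
import re
from collections import Counter

# Matches "firstBadLink 192.168.1.11:50010"; an optional "as " is tolerated
BAD_LINK = re.compile(r"firstBadLink\s+(?:as\s+)?([\w.\-]+):(\d+)")


def count_bad_links(log_lines):
    """Return a Counter of (host, port) pairs reported as firstBadLink."""
    counts = Counter()
    for line in log_lines:
        match = BAD_LINK.search(line)
        if match:
            counts[(match.group(1), int(match.group(2)))] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "INFO hdfs.DFSClient: Exception in createBlockOutputStream "
        "java.io.IOException: Bad connect ack with firstBadLink 192.168.1.11:50010",
        "INFO hdfs.DFSClient: Abandoning block blk_-8575812198227241296_1001",
    ]
    for (host, port), n in count_bad_links(sample).most_common():
        print(f"{host}:{port} reported {n} time(s)")
```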

This article is from 电脑杂谈. When reposting, please credit the original URL:

http://www.pc-fly.com/a/ruanjian/article-86344-2.html

-

-

张航兴

张航兴请问

-

杜军舜

杜军舜比数量

-

-

冯靖贺

冯靖贺此后

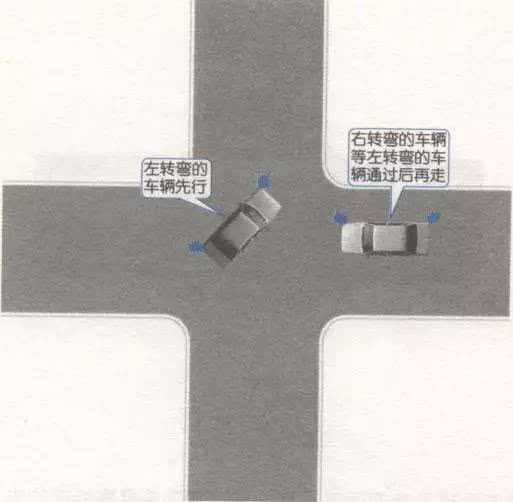

在回旋处行驶. 当其他车辆被迫进入时,只要它们具有优先权,就可以避免进入.

在回旋处行驶. 当其他车辆被迫进入时,只要它们具有优先权,就可以避免进入. 武警忠诚卫士心得体会6篇

武警忠诚卫士心得体会6篇 增加知识|如何拯救自己?不会游泳的人掉入水中后可以自救

增加知识|如何拯救自己?不会游泳的人掉入水中后可以自救 荒村听雨?荒村踽?荒村红杏?正文 第八十九章:夜寒苏(上)

荒村听雨?荒村踽?荒村红杏?正文 第八十九章:夜寒苏(上)

农民才55元能做什么